setProperty ( "driver", jdbcDriver ) jdbcProps. DriverĬreate 2 Postgresql tables which I plan to populate and join for demonstration purposes % jdbc CREATE TABLE accounts ( id serial PRIMARY KEY, name VARCHAR ( 50 ) UNIQUE NOT NULL ) CREATE TABLE reports ( id serial PRIMARY KEY, account_id integer NOT NULL, name VARCHAR ( 50 ) NOT NULL ) ĭefine credentials in Scala code and create an initial JDBC DataFrame connection variable for reading and writing % spark val jdbcUrl = "jdbc:postgresql://localhost:5432/spark_data" val jdbcDriver = "" val jdbcUser = "spark_data" val jdbcPassword = "spark_data" import val jdbcProps = new Properties () jdbcProps. jarĮnsure the Postgresql driver is loaded % spark Class. 2 - bin - all / interpreter / jdbc / postgresql - 9.4 - 1201 - jdbc41. jars / Users / eric / Documents / code / spark / zeppelin / zeppelin - 0.8.

In my first paragraph, I included the postgresql JDBC driver jar, ex: % spark. I added a new Zeppelin Notebook (with default interpreter: spark/scala) and began adding paragraphs. I added my postgresql credentials for the jdbc interpreter Browse to:ĭefault.url: jdbc:postgresql://localhost:5432/ Part 4: Scala and Spark development via Zeppelin # ensure ENV var is set to the extracted path, example: export ZEPPELIN_HOME =/Users/eric/Documents/code/spark/zeppelin/zeppelin-0.8.2-bin-all # browse to, download zeppelin-0.8.2-bin-all.tgz # extract tar -xzf zeppelin-0.8.2-bin-all.tgz I encountered an issue installing Apache Zeppelin via homebrew, so I manually downloaded the full package. > alter user spark_data with encrypted password 'spark_data' > grant all privileges on database spark_data to spark_data Postgresql started eric /Users/eric/Library/LaunchAgents/Ĭreate a Postgresql database and user for Spark development createdb spark_data # run example $HADOOP_HOME/bin/hadoop jar $HADOOP_HOME/share/hadoop/mapreduce/hadoop-mapreduce-examples-3.2.1.jar grep input output 'dfs+' # inspect results $HADOOP_HOME/bin/hdfs dfs -cat output/part-r-00000Įnsure Postgresql is running brew services list | egrep -i "(^Name|postgresql)" $HADOOP_HOME/bin/hdfs dfs -put $HADOOP_HOME/etc/hadoop/ *.xml input $HADOOP_HOME/bin/hdfs dfs -mkdir /user/ $(whoami ) # copy some test files $HADOOP_HOME/bin/hdfs dfs -mkdir input Test HDFS, Hadoop, MapReduce: # make HDFS directories to execute MadReduce jobs $HADOOP_HOME/bin/hdfs dfs -mkdir /user # Start ResourceManager daemon and NodeManager daemon $HADOOP_HOME/sbin/start-yarn.sh Prepare HDFS and start Hadoop services # format HDFS filesystem $HADOOP_HOME/bin/hdfs namenode -format # Start NameNode daemon and DataNode daemon $HADOOP_HOME/sbin/start-dfs.sh Yarn-site.xml -services mapreduce_shuffle -whitelist JAVA_HOME,HADOOP_COMMON_HOME,HADOOP_HDFS_HOME,HADOOP_CONF_DIR,CLASSPATH_PREPEND_DISTCACHE,HADOOP_YARN_HOME,HADOOP_MAPRED_HOME Mapred-site.xml yarn 
$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/*:$HADOOP_MAPRED_HOME/share/hadoop/mapreduce/lib/*

cat ~/.ssh/id_rsa.pub > ~/.ssh/authorized_keysĬhanges I made to Hadoop configuration files, located in $HADOOP_CONF_DIRĬore-site.xml fs.defaultFS hdfs://localhost:9000 # environment variables added to ~/.profile export JAVA_HOME = " $(/usr/libexec/java_home ) " export HADOOP_HOME =/usr/local/Cellar/hadoop/3.2.1/libexecĮxport HADOOP_MAPRED_HOME =/usr/local/Cellar/hadoop/3.2.1/libexecĮxport HADOOP_CONF_DIR = $HADOOP_HOME/etc/hadoopĮxport SPARK_HOME =/usr/local/Cellar/apache-spark/2.4.4/libexecĮxport ZEPPELIN_HOME =/Users/eric/Documents/code/spark/zeppelin/zeppelin-0.8.2-bin-allĮxport PATH = " $PATH :/usr/local/opt/scala/bin"Įnsure you can ssh to localhost without a password.

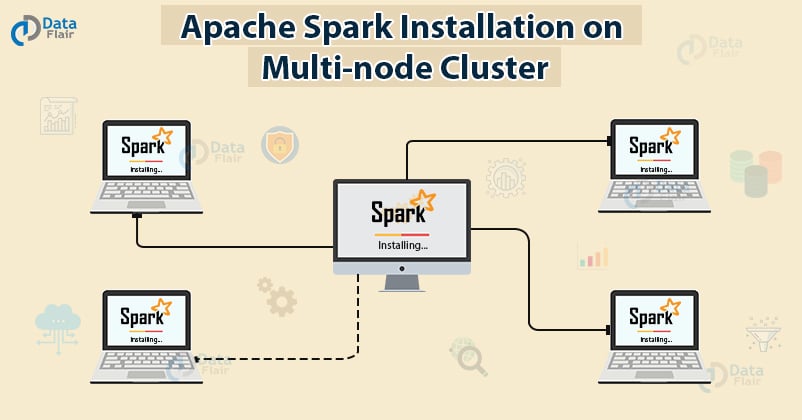

Java HotSpot (TM ) 64-Bit Server VM (build 25.241-b07, mixed mode ) # show installed brew versionsīrew list -versions | egrep -i "(hadoop|spark|scala|zeppelin|postgresql)" Java (TM ) SE Runtime Environment (build 1.8.0_241-b07 ) I installed Java JDK from Orcacle Spark, Hadoop, Postgresql, and Scala via homebrew and downloaded Apache Zeppelin manually. The goal of this exercise is to connect to Postgresql from Zeppelin, populate two tables with sample data, join them together, and export the results to separate CSV files (by primary key). In this post, I’ll demonstrate how to run Apache Spark, Hadoop, and Scala locally (on OS X) and prototype Spark/Scala/SQL code in Apache Zeppelin.

Running Apache Spark and Hadoop locally on OSX, prototyping Scala code in Apache Zeppelin, and working with Postgresql/CSV/HDFS data
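The `Class.forName` step above throws a `ClassNotFoundException` if the jar from the first paragraph isn't on the Spark interpreter's classpath. If you'd rather get a true/false answer in a paragraph, a minimal sketch wraps the lookup in `scala.util.Try`. The helper name `driverOnClasspath` is my own, not a Zeppelin or Spark API.

```scala
import scala.util.Try

// Hypothetical helper (not a Zeppelin or Spark API): true if the named class
// can be loaded, false if it cannot be found (Try catches ClassNotFoundException).
def driverOnClasspath(className: String): Boolean =
  Try(Class.forName(className)).isSuccess

// In a %spark paragraph, check the Postgresql driver class used in this post.
val postgresLoaded = driverOnClasspath("org.postgresql.Driver")
println(s"org.postgresql.Driver loaded: $postgresLoaded")
```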
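The stated goal is to join the two tables and export the result to separate CSV files by primary key. Before wiring that up against Postgresql, the logic can be prototyped with plain Scala collections standing in for Spark DataFrames. This is a sketch under my own assumptions: the case classes mirror the `accounts` and `reports` columns, the join is an inner join on `reports.account_id = accounts.id`, and the output is one CSV string per account id. In Spark, `DataFrameWriter.partitionBy("account_id")` would play roughly that splitting role, writing one output directory per key.

```scala
// Plain-Scala stand-ins for the accounts and reports tables (assumed column mapping).
case class Account(id: Int, name: String)
case class Report(id: Int, accountId: Int, name: String)

// One joined row: a report plus the name of the account it belongs to.
case class AccountReport(accountId: Int, accountName: String, reportId: Int, reportName: String)

// Quote a CSV field only when it contains a comma, quote, or line break (RFC 4180 style).
def csvField(s: String): String =
  if (s.exists(c => c == ',' || c == '"' || c == '\n' || c == '\r'))
    "\"" + s.replace("\"", "\"\"") + "\""
  else s

// Inner join on reports.account_id = accounts.id; reports without a matching account are dropped.
def joinReports(accounts: Seq[Account], reports: Seq[Report]): Seq[AccountReport] = {
  val byId = accounts.map(a => a.id -> a).toMap
  reports.flatMap { r =>
    byId.get(r.accountId).map(a => AccountReport(a.id, a.name, r.id, r.name))
  }
}

// Group joined rows by account id and render one CSV document (header + rows) per account.
def csvByAccount(rows: Seq[AccountReport]): Map[Int, String] = {
  val header = "account_id,account_name,report_id,report_name"
  rows.groupBy(_.accountId).map { case (accountId, group) =>
    val lines = group.sortBy(_.reportId).map { r =>
      Seq(r.accountId.toString, csvField(r.accountName), r.reportId.toString, csvField(r.reportName))
        .mkString(",")
    }
    accountId -> (header +: lines).mkString("\n")
  }
}

// Made-up sample data for illustration only.
val accounts = Seq(Account(1, "acme"), Account(2, "globex"))
val reports = Seq(Report(1, 1, "q1 sales"), Report(2, 2, "inventory"), Report(3, 1, "q2 sales, revised"))

val files = csvByAccount(joinReports(accounts, reports))
files.toSeq.sortBy(_._1).foreach { case (id, csv) =>
  println(s"--- account_${id}.csv\n$csv")
}
```

Keeping the join and the per-key rendering as separate functions makes it easy to swap either half for the Spark equivalent later.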
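Zeppelin, Spark, and the Hadoop scripts all depend on the variables exported in ~/.profile, and a process started from a shell that hasn't re-read that file won't see them. A small check you could run in a %spark paragraph or any Scala REPL is sketched below. The helper name and the variable list are my own assumptions, taken from the exports earlier in the post.

```scala
// Variables exported in ~/.profile in this setup (list assumed from the post's exports).
val requiredVars = Seq(
  "JAVA_HOME", "HADOOP_HOME", "HADOOP_MAPRED_HOME",
  "HADOOP_CONF_DIR", "SPARK_HOME", "ZEPPELIN_HOME"
)

// Hypothetical helper: return the names that are unset or blank in the given environment.
def missingVars(env: Map[String, String], names: Seq[String]): Seq[String] =
  names.filter(name => env.get(name).forall(_.trim.isEmpty))

// sys.env is the environment of the current JVM process (e.g. the Zeppelin interpreter).
val missing = missingVars(sys.env, requiredVars)
if (missing.isEmpty) println("all required variables are set")
else println(s"missing: ${missing.mkString(", ")}")
```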
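The `/user/$(whoami)` directory in the HDFS test matters because relative paths such as `input` and `output` resolve against the user's HDFS home directory, which defaults to `/user/<username>`, on the filesystem named by `fs.defaultFS`. The sketch below spells out that resolution for this setup; `qualifyHdfsPath` is a hypothetical helper, not part of the Hadoop API.

```scala
// Hypothetical helper (not part of the Hadoop API) mirroring how a path given to
// `hdfs dfs` is qualified: absolute paths are kept as-is, relative paths are placed
// under the user's home directory, /user/<username> by default.
def qualifyHdfsPath(defaultFs: String, user: String, path: String): String = {
  val absolute = if (path.startsWith("/")) path else s"/user/$user/$path"
  defaultFs.stripSuffix("/") + absolute
}

// fs.defaultFS as set in core-site.xml for this setup.
val defaultFs = "hdfs://localhost:9000"
// The JVM's user name, which normally matches `whoami`.
val currentUser = System.getProperty("user.name")

println(qualifyHdfsPath(defaultFs, currentUser, "input"))
println(qualifyHdfsPath(defaultFs, currentUser, "output/part-r-00000"))
```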
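The `grep` job from the examples jar counts how often each match of the regular expression (its third argument, `'dfs+'` in this post, which matches "df" followed by one or more "s") occurs across the input files, and writes the counts from most to least frequent. To help when reading `part-r-00000`, here is a plain-Scala approximation of that computation over in-memory strings instead of HDFS files. It is my own sketch of the behavior, not the example's source code.

```scala
import scala.util.matching.Regex

// Rough approximation of the examples-jar grep job (not its actual source):
// collect every regex match in every line, count each distinct match, and sort
// by count, highest first. Ties are broken alphabetically to keep output stable.
def grepCounts(lines: Seq[String], pattern: Regex): Seq[(Long, String)] =
  lines
    .flatMap(line => pattern.findAllIn(line))
    .groupBy(identity)
    .toSeq
    .map { case (m, occurrences) => (occurrences.size.toLong, m) }
    .sortBy { case (count, m) => (-count, m) }

// Format as "count<TAB>match" lines, the shape of the job's part-r-00000 file.
def renderGrepOutput(counts: Seq[(Long, String)]): String =
  counts.map { case (count, m) => s"$count\t$m" }.mkString("\n")

// Made-up input lines standing in for the *.xml files copied into HDFS.
val sampleLines = Seq(
  "<name>dfs.replication</name>",
  "hdfs dfsadmin -report"
)
println(renderGrepOutput(grepCounts(sampleLines, "dfs+".r)))
```

Converting the grouped map to a `Seq` before mapping matters: mapping a `Map` straight to `(count, match)` pairs would build a new `Map` keyed by count and silently drop matches that share a count.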
